Youssef's doctoral thesis, “Adaptive systems for real-time
affective state detection in human-robot interaction using thermal imaging,
multimodal fusion, and contextual understanding”, was
supported by the Wallenberg AI, Autonomous Systems and Software Program
at KTH's Division of Robotics, Perception and Learning. Along the way he
published the first automatic frustration detection paper using thermal
imaging at ACM/IEEE HRI, then the first context-aware affective fusion paper at
RO-MAN, and built the tooling underneath the rest of the field.

That tooling was ROS4HRI, an open-source framework
standardising how social robots handle faces, bodies, voices, and the slippery
problem of knowing which person is which in a crowded room. PAL Robotics
adopted it as the standard HRI toolkit for their entire commercial fleet:
ARI, TIAGo, and the receptionist prototype Youssef helped architect as a
Visiting Researcher in Barcelona.

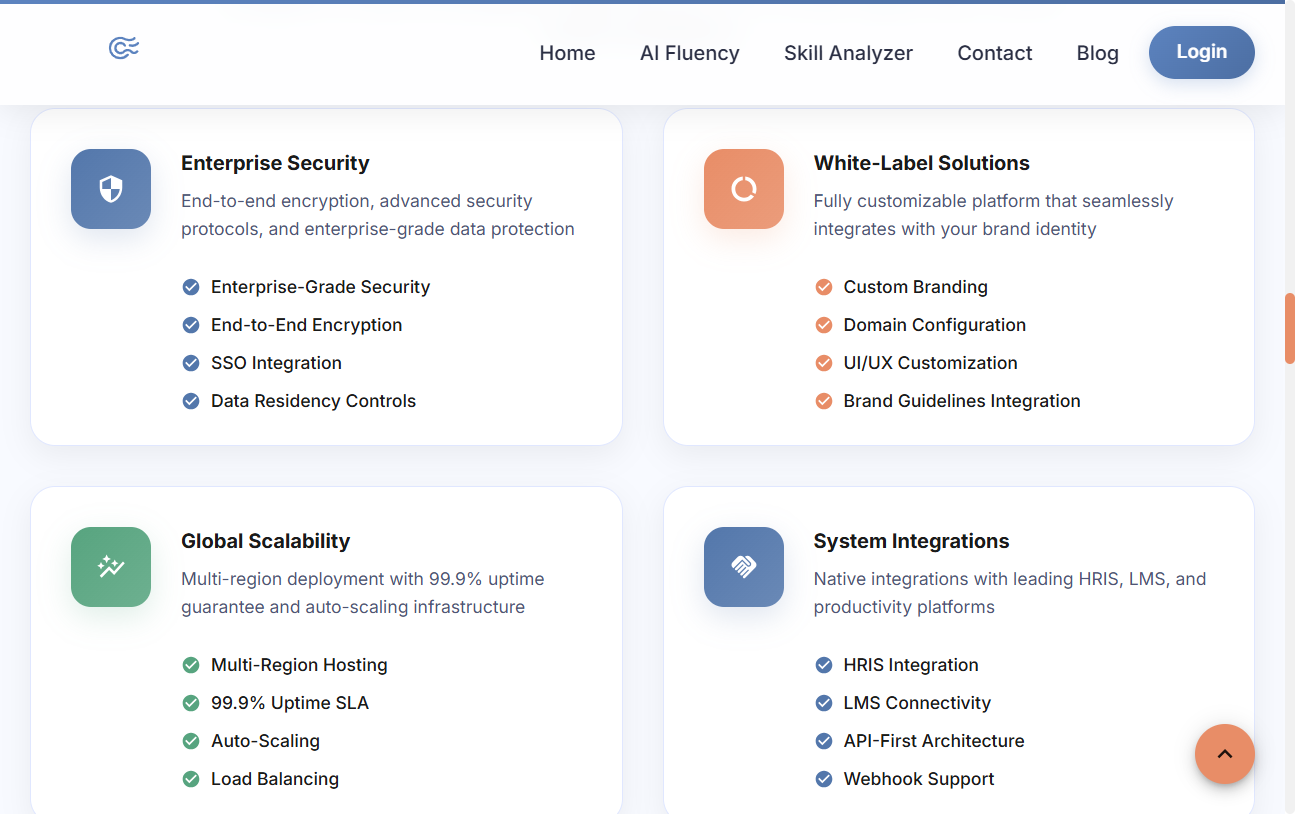

Today he channels the same obsession into companies. Pokamind AB
is the PhD, productised: €43K in Vinnova/Almi grants, €150K in
enterprise clients, and a cross-functional team of 8+ shipping a GCP-backed
SaaS that gives each learner structured, opt-in practice on their own
communication style, then returns a private, explainable report the
learner owns and controls.

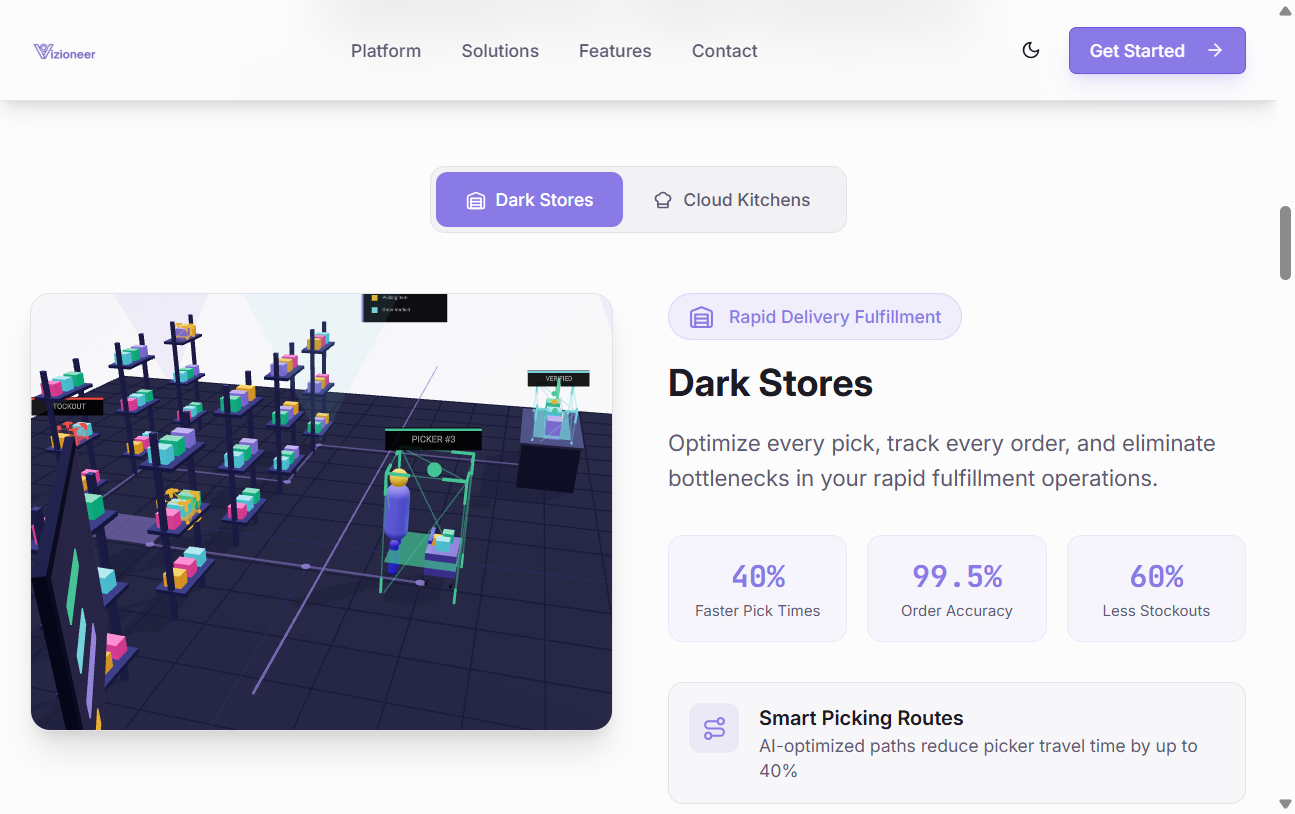

Vizioneer turns existing cameras in dark stores and cloud
kitchens into an operational command centre. HighlightsHub
gives every Sunday-league football match its own highlight reel.

Before any of that came a Master's at Bristol (building a system that could
tell, from across a room, whether two people were actually enjoying each other's
company) and a Fiat-sponsored BSc at Surrey (teaching a light commercial
vehicle to steer itself, politely, around pedestrians). The research hasn't
really changed since. Only the table size.